Philosophy has always intrigued me and René Descartes is one of my personal favorite philosophers. Cogito ergo sum “I think, therefore I am”. The moment we stop thinking is the moment we stop existing. So, today I decided to learn something new. I wanted to setup a Stretch Database in SQL Server 2016.

Just about everything in SQL Server can be accomplished by either the SSMS GUI or Transact SQL commands. Anytime, I attempt something for the first time I always use the GUI, then learn the T-SQL commands.

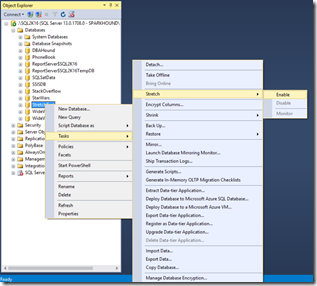

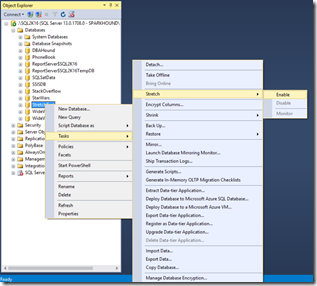

I began by right clicking on my [StretchTest] database and selecting TASKS >> STRETCH >> ENABLE.

This will launch the “Enable Stretch Wizard. First step in the wizard is the the Introduction. First thing I noticed was the the statement: “Once the data is migrated to Azure, unique and primary keys will not be enforced on Azure” Really? I wonder what else will be “not enforced” or not compatible with Azure. Time to look up my friendly neighborhood BOL, Limitations for Stretch Database. Wow, that’s a good number of limitations; however this is a simple test so I am not worried.

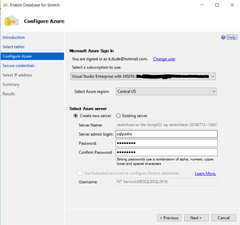

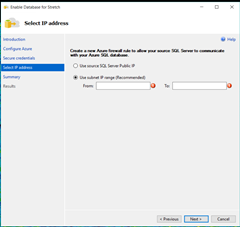

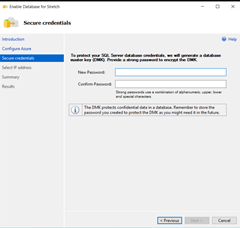

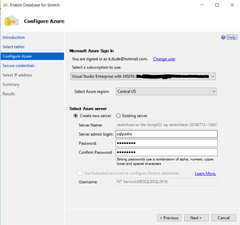

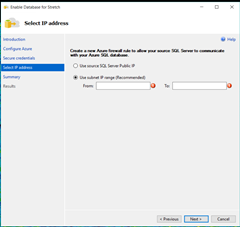

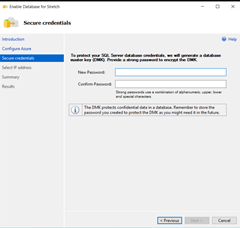

Next we have to connect to an Azure Subscription. The wizard step allows you to configure your SQL Azure database environment, select the Azure region (always pick one closest to you) and you get to choose between a preconfigured Azure server or allow the wizard to create one. I chose to allow SSMS to create a new Azure server. Next you have to create a database master key (DMK) to protect your Azure data. And finally you need to provide your IP Address (remember your external IP, not your machine IP) so SSMS can setup firewall rules for you.

Then you get the customary SQL Summary page before you execute the commands. Now that my database has been stretched, I added a table and data using this schema:

CREATE TABLE [dbo].[tblUsers](

[ID] [int] IDENTITY(1,1) NOT NULL,

[FirstName] [varchar](50) NULL,

[LastName] [varchar](50) NULL,

[Email] [varchar](100) NULL,

[DateEntered] smalldatetime null,

CONSTRAINT [PK_tblUsers] PRIMARY KEY CLUSTERED

(

[ID] ASC

) ON [PRIMARY]

)

GO

I not only wanted to test the Primary Key limitation; but I also wanted to test the Filtered Stretch on the Date Entered Column. I populated the table with 11,000 records using the website http://mockaroo.com.

After the tblUsers table was populated, I enabled the Stretch Table wizard by right clicking on the table and selecting STRETCH >> ENABLE. After the Introduction screen you get to select which tables you would like to stretch to Azure. The GUI screen shows you all the tables and if there will be any “warnings” about stretching, the option to filter, number of rows in the table and the size of the table.

The first time I executed this I did not use the Stretch Filter and stretched the entire table. It worked perfectly. I could use SSMS to connect to my remote SQL Server and query the Azure table directly and could see there were 11,000 rows.

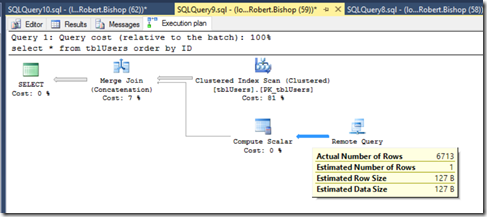

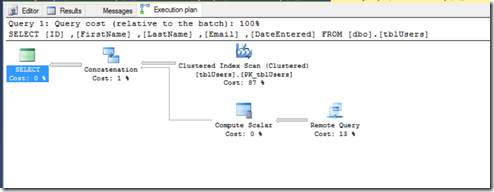

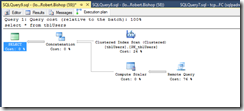

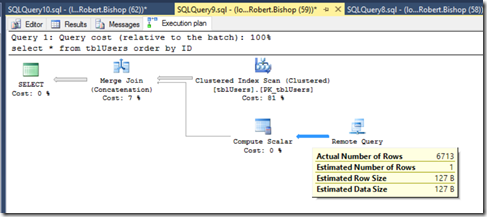

Executing a simple SELECT query against the local database produced this Query Execution plan:

As you can see, we now have a new path in our query plan with an operator called “Remote Query”. Basically the local server queries the remote query then using the local Primary key Concatenates them back together to produce the desired result. So can we update the data?

Nope, sure can’t. Once the data lives in Azure, the data is READ ONLY.

Next I wanted to add the filtering on  the DateEntered column so I can edit more recent data. I disabled Stretch and re-enabled it using the filters this time. I received error after error after error. According to BOL this query is not a valid filtering query. According to BOL, the date parameter needs to include the format value. At least that was the case with mine.

the DateEntered column so I can edit more recent data. I disabled Stretch and re-enabled it using the filters this time. I received error after error after error. According to BOL this query is not a valid filtering query. According to BOL, the date parameter needs to include the format value. At least that was the case with mine.

/* INCORRECT */

CREATE FUNCTION dbo.fn_example5(@column1 datetime)

RETURNS TABLE

WITH SCHEMABINDING

AS

RETURN SELECT 1 AS is_eligible

WHERE @column1 < CONVERT(datetime, '1/1/2014')

GO

/* CORRECT */

CREATE FUNCTION dbo.fn_example5(@column1 datetime)

RETURNS TABLE

WITH SCHEMABINDING

AS

RETURN SELECT 1 AS is_eligible

WHERE @column1 < CONVERT(datetime, '1/1/2014',101)

GO

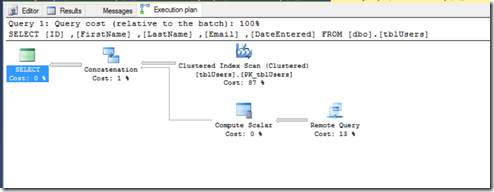

After initiating the filter, I queried the remote server directly and noticed there were

6713 rows found in Azure

and 4287 rows found in the local database. Exactly what I expected. Again, the query execution plan has to use “Remote Query” and Concatenate the results to produce the desired results.

Final Thoughts on Stretch Database

The SSMS has designed the Stretch Wizards to be very effective and very easy to use. However, when you “Disable” a stretch database it does not delete the table/database in Azure which means you will still incur charges for storage. And if you re-enable Stretch on a database it creates a 2nd database in Azure.

With the READ-ONLY limitation from the Azure side of Stretch database, you better make 100% sure you don’t need to edit anything in Azure. The only way to make any changes to the Azure side of the data is to Disable and “bring the data back” to the local machine and this incurs charges on the Azure side. Then you make your changes to the data, and re-enable it for Stretch. Which again, created another database in your Azure server.

The pricing for Azure SQL Stretch database seems to be a little steep. At the lowest performance level offered, it is ~$1860 per month! Microsoft calculates what they call DSU (Database Stretch Units) which “represents thee power of the query and is quantified by workload objectives: how fast rows are written, read and computed against.” https://azure.microsoft.com/en-us/pricing/details/sql-server-stretch-database/

All in all, Stretch Database is a very cool feature for archiving older data that will not change. But only if you can afford it. I would think using file groups with partitioning of data on separate drives could be a more cost effective solution for archiving than Azure stretch database. A very good learning experience.